The Top 10 Retail Surveillance Strategies for Success

Retail surveillance is becoming smart. The hybridization of traditional retail surveillance with AI-based video analytics is fast becoming the norm....

How Facial Recognition Works and How to Get Started in 2022

What is facial recognition? How does facial recognition work? We’re here to answer those questions and give you a better...

Digital Transformation Trends: The Future of Business in 2022

Digital transformation is the use of new technologies to improve existing processes or create entirely new ones. Companies are...

Current & Future Digital Transformation Trends

Digital transformation has become a necessity for organizations that want to thrive in today’s business world. Such a process means...

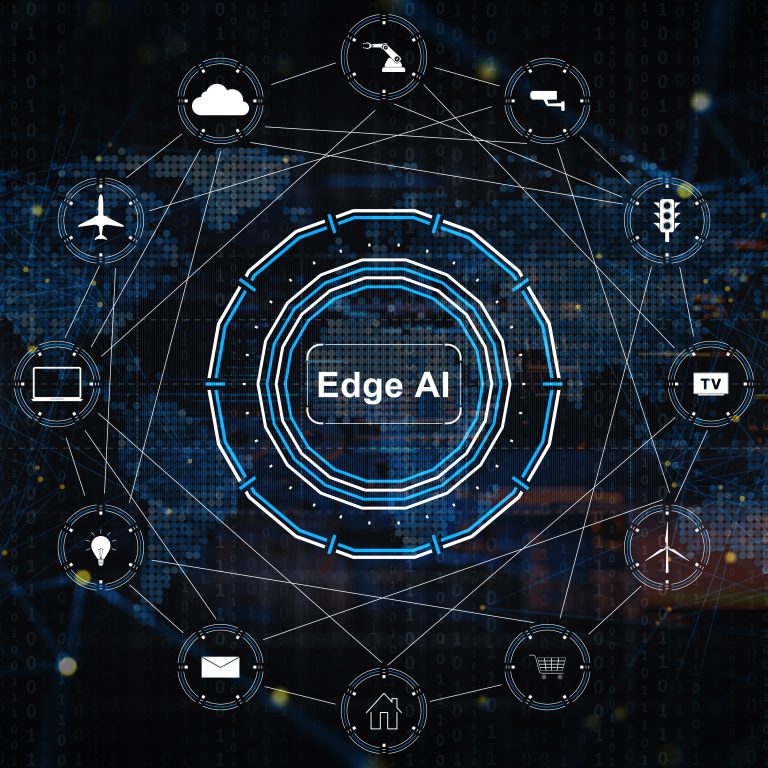

Gorilla Edge AI

Gorilla combines machine learning and deep learning, customizing models such as MobileNet SSD and ResNet, to provide a wide range of highly accurate analytics at the edge.

Gorilla’s intelligent and custom solutions drive automation and reveal insight to human operators.

Use machines to handle repetitive chains of events which require immediate responses.

Give staff the ability to see patterns hidden in human behavior.

Put the reach of the Internet of Things and the power of video analysis to work for you!

OpenVINO™ Optimized Edge AI

Gorilla’s IVAR® (Intelligent Video Analytics Recorder) is certified as the first IVA-based security software partner integrated with OpenVINO in the Intel IoT Solutions Alliance. This collaboration enables Gorilla’s Edge devices to run one and a half times more real-time video feed analytics without using GPUs. Gorilla’s clients benefit from lower deployment costs because more video channels can be analyzed on the same hardware.
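To see why more channels per device can mean lower deployment costs, here is a rough back-of-the-envelope sketch. It assumes the "one and a half times" figure means each device handles 1.5 times as many channels. The channel counts and the device price are hypothetical numbers chosen for illustration; they are not Gorilla or Intel figures.

```python
import math


def devices_needed(total_channels: int, channels_per_device: int) -> int:
    """Return how many edge devices are required to cover every channel."""
    return math.ceil(total_channels / channels_per_device)


# Hypothetical figures for illustration only (assumptions, not vendor data).
TOTAL_CHANNELS = 48                # cameras to analyze across a site
BASELINE_CHANNELS_PER_DEVICE = 8   # channels one device handles before optimization
SPEEDUP = 1.5                      # the "one and a half times" figure, read as a multiplier
DEVICE_COST = 1000                 # arbitrary currency units per device

# Channels per device after optimization, rounded down to a whole channel.
optimized_channels_per_device = math.floor(BASELINE_CHANNELS_PER_DEVICE * SPEEDUP)

baseline_devices = devices_needed(TOTAL_CHANNELS, BASELINE_CHANNELS_PER_DEVICE)
optimized_devices = devices_needed(TOTAL_CHANNELS, optimized_channels_per_device)

baseline_cost = baseline_devices * DEVICE_COST
optimized_cost = optimized_devices * DEVICE_COST

print(f"Baseline:  {baseline_devices} devices, cost {baseline_cost}")
print(f"Optimized: {optimized_devices} devices, cost {optimized_cost}")
```

With these assumed numbers, the same 48 channels need four devices instead of six. The real savings depend on actual per-device throughput, which varies with hardware, resolution, and the analytics being run.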